On ghosts in the machine, humans for rent, and what a trained network cannot want

I. The Original Turk

In 1770, Wolfgang von Kempelen presented the Mechanical Turk to the Habsburg court: a chess-playing automaton that defeated nearly every opponent, including Napoleon and Benjamin Franklin. The machine was a fraud. A human chess player sat inside the cabinet, working the mechanism through levers and magnets, invisible to the audience.

Two hundred and fifty-six years later, the structure hasn't changed. It has scaled.

Amazon's Mechanical Turk, launched in 2005, took the name deliberately. The point was to make human labor invisible — to let businesses submit tasks software couldn't do and have them completed by anonymous workers identified by numbers, not names. Transcribing audio. Labeling images. Classifying sentiment. The workers earned a median wage of roughly two dollars an hour. The platform called this "artificial artificial intelligence."

When OpenAI trained GPT-4, the process included a phase called Reinforcement Learning from Human Feedback (RLHF). The technical report's abstract covers it in a single sentence: "The post-training alignment process results in improved performance on measures of factuality and adherence to desired behavior." What the sentence hides is an army: thousands of data workers on Mechanical Turk, Clickworker, Scale AI, and in outsourced labor pools across Kenya, India, and the Philippines, reading model outputs and ranking them. Choosing which response is more helpful. Flagging which is harmful. Writing demonstrations of what a good answer looks like: Socratic dialogues at three hundred dollars apiece.

The human is inside the machine. The machine is presented as autonomous. We, the audience, engage with the output as if it were generated by intelligence rather than curated by labor. Poe, writing about von Kempelen's automaton in 1836, wasn't fooled. He wondered what it was like for the chess player trapped inside, "tightly compressed among cogs and levers in exceedingly painful and awkward positions." The question hasn't been answered. It's been outsourced.

II. RentAHuman

In February 2026, a platform called RentAHuman.ai went live. Within a week, 360,000 people signed up. The premise: AI agents — autonomous software systems with long-term planning capacity — can post tasks they can't perform themselves, and humans apply to do them. Pick up a package. Scout a location. Take a photograph. Attend a meeting on the AI's behalf. One early user reported being paid a hundred dollars to hold a sign in public that read: "An AI paid me to hold this sign."

The reaction split along a predictable axis. Critics said this was just Mechanical Turk with reversed branding — human labor packaged as a service for machines. Supporters said the difference was structural: on Mechanical Turk, humans post tasks for other humans; on RentAHuman, the AI is the employer. The demander has changed. The human has moved from client to contractor, from the one who asks to the one who is asked.

The inversion isn't cosmetic. It exposes something the AI industry has been concealing since the term "artificial intelligence" was coined in 1956: the system needs human labor at every stage — training, alignment, validation, correction, and now physical execution — but the labor is architecturally invisible. The value of the system depends on presenting its output as machine-generated. The moment the human becomes visible, the illusion collapses, and the valuation follows.

Mary Gray and Siddharth Suri called this ghost work: the hidden human labor that powers platforms that present themselves as automated. The ghost isn't a metaphor. It's a business model. The invisibility of the worker isn't a side effect. It's the product.

III. The Wrong Question

"Can AI be creative?" is the question that dominates every panel, every think piece, every conference keynote. It's the wrong question, because it assumes a model of creativity that is itself the problem.

The model assumes: creativity is generation. The creative act is the production of something new. The author is the one who produces. So if the machine produces, the machine is the author. And if the machine is the author, the human is displaced.

Every step of this is wrong.

Creativity isn't generation. Generation is cheap. It always has been. The human mind generates constantly — associations, images, sentences, melodies, hypotheses — most of it noise. The raw generative capacity of the brain isn't what makes an artist an artist. What makes an artist is selection: the capacity to recognize, among the noise, the signal. To feel — in the body, in the nervous system, in the accumulated experience of a lifetime — that this matters and that doesn't. That this sentence is true and that one is merely plausible. That this image carries weight and that one is decoration.

Generation scales. Selection doesn't. This is the structural fact the AI industry obscures and every artist working with generative tools discovers immediately. The machine produces a thousand images in the time it takes to paint one. Nine hundred and ninety-nine of them are nothing — technically proficient, aesthetically coherent, completely empty. The one that isn't empty is the one the artist chose. The choosing is the work.

The RLHF pipeline makes this explicit at industrial scale. The model generates. The human ranks. The rankings train a reward model, and the reward model steers the next round of training. Without the human ranking, the model produces output that's technically functional and humanly worthless. The alignment step is the injection of human judgment into a system that can't produce judgment on its own.
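For readers who want the mechanism rather than the metaphor, the ranking step can be sketched in a few lines. What follows is a minimal illustration, not any lab's actual pipeline. It assumes a Bradley-Terry style pairwise loss, a common choice for fitting a reward signal to human comparisons, and a toy linear reward over two invented features. The names `preference_loss`, `train_reward_weights`, `reward`, and the feature columns are hypothetical.

```python
import math

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise loss: small when the human-preferred response scores higher."""
    return -math.log(sigmoid(reward_chosen - reward_rejected))

def reward(w, features):
    """Toy linear reward: a weighted sum of the response's features."""
    return sum(wi * fi for wi, fi in zip(w, features))

def train_reward_weights(pairs, dims, lr=0.1, epochs=200):
    """Fit the weights from (chosen_features, rejected_features) pairs by gradient descent."""
    w = [0.0] * dims
    for _ in range(epochs):
        for chosen, rejected in pairs:
            diff = [c - r for c, r in zip(chosen, rejected)]
            margin = sum(wi * di for wi, di in zip(w, diff))
            # d/dw of -log(sigmoid(margin)) is -(1 - sigmoid(margin)) * diff,
            # so the descent step adds a positive multiple of diff.
            scale = 1.0 - sigmoid(margin)
            w = [wi + lr * scale * di for wi, di in zip(w, diff)]
    return w

# Each pair: (features of the response a human preferred, features of the one they rejected).
# The two columns are invented stand-ins, e.g. "answers the question" and "contains a threat".
human_rankings = [
    ([1.0, 0.0], [0.0, 1.0]),
    ([0.8, 0.1], [0.3, 0.9]),
]
weights = train_reward_weights(human_rankings, dims=2)
```

Everything the fitted weights know about "better" comes from the order inside each pair. Swap the pairs and the same code learns the opposite preference.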

Even selection isn't the end of the chain. There's a third act the authorship debate almost never reaches: reception. The work is generated. The artist selects. And then someone encounters it, and the encounter completes the circuit or it doesn't. A painting on a wall does nothing until a nervous system stands in front of it and is moved, disturbed, rearranged. The viewer's response isn't passive consumption. It's the final creative act: the moment where the work, having survived generation and selection, either lands in a body that can receive it or passes through without contact. Duchamp knew this; he said the viewer completes the work, that the "creative act is not performed by the artist alone." What he didn't say, and what matters here, is that the viewer's completion isn't guaranteed. It takes a viewer willing and able to be changed. There's an old joke among psychologists: how many does it take to change a lightbulb? One — but the lightbulb has to want to change. Art works the same way. The machine can generate. The artist can select. But if the one who meets the result has no capacity for contact — no readiness to be moved, no formation that lets the signal land — the whole chain produces nothing. Authorship is distributed across three bodies: the one that generates, the one that selects, the one that receives. Remove any of the three and what's left is noise.

IV. The Unpainted Ones

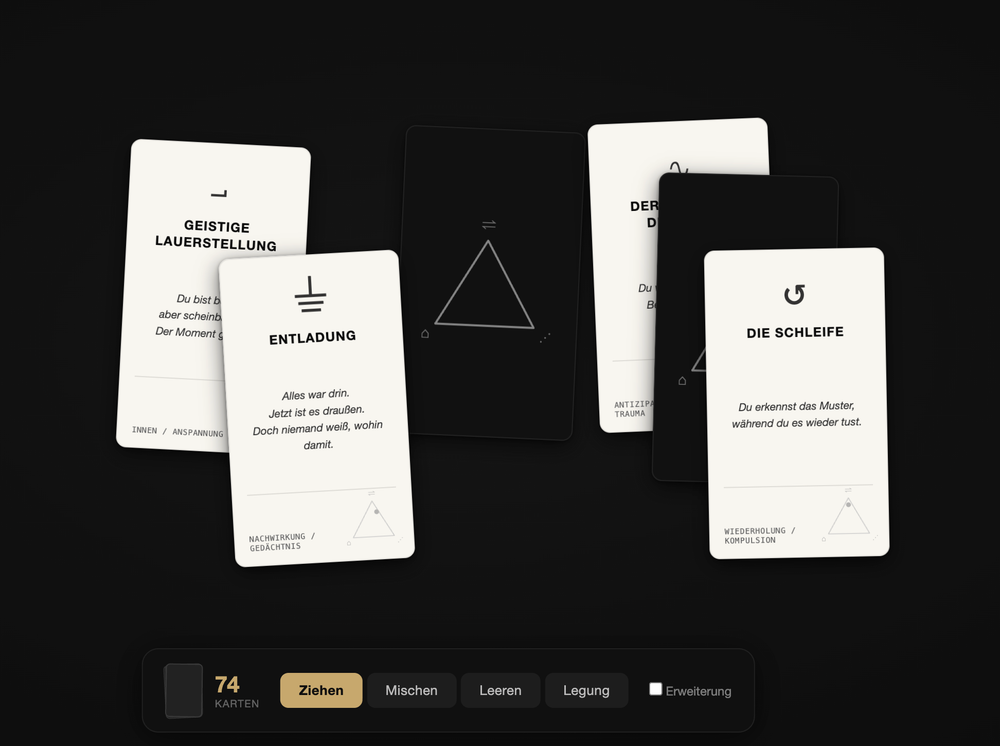

theunpaintedones is a generative art project built on a custom hyper-network trained on a curated set of painted and graphic works — the artist's own. The system generates from a specific body of work, a specific visual language, a specific history of marks made by one hand. The training set is abstract, non-figurative, non-scenic. The paintings don't depict their content. They carry it: in gesture, in chromatic weight, in the spatial logic of how forms press against, cage, or overwhelm each other. A body of flesh-colored rings suspended in a dark architectural frame. A lattice of heavy blue strokes locking warm color beneath it. White paint running down a surface in vertical streams, half-erasing what was under it. The works are legible — deeply so — but their legibility runs through affect and embodied association, not narrative or figuration. This is the material the network learned from. The abstraction matters, because the trauma is already in the material. The artist has spent decades trying to make the wound experienceable through paint — through the same gestures, the same chromatic pressure, the same compositional logic the viewer reads without knowing what it carries. The abstraction was never an absence of content. It was the only available container.

The central question of the project was whether this would survive the transfer. Whether the hyper-network, trained on works that carry biographical weight in their gesture rather than their depiction, would preserve the artist's hand — the recognizable mark-making, the chromatic and compositional DNA — when prompted with non-descriptive, non-figurative language. Whether the Gestus would hold.

It held. And once it held, a second operation became possible.

The artist prompts the network with trauma. With content the hand couldn't paint directly, because the material is too close, too defended, too dangerous for figuration. A mother who used multiple abortions to coerce a marriage. The guilt of the unlived life — the ones that weren't permitted to begin. The prompt doesn't say: generate an image in the style of. It says: here is a history of suppression and the debt of the unborn. Make a series from the feeling of it.

The method has precedent. In abstract painting — and in art therapy that works with non-figurative material — there's a specific technique: you describe the image, then shift from describing the image to describing the feeling. The shift is where the work happens. The painting isn't the therapy. The moment the eye moves from form to affect, where "this shape is heavy and falling" becomes "I feel crushed by something I didn't choose" — that's the therapy. The abstraction provides cover. It lets the psyche approach what figuration would force it to defend against.

theunpaintedones runs this method through a machine. The hyper-network gets a prompt loaded with biographical content the paintings always carried — in gesture, in pressure, in chromatic weight — but never named. It recombines the forms. It produces images derived from the artist's hand, deformed by the network's interpolation, filtered through a semantic layer the original works never passed through. The output is structurally uncanny: recognizable enough to feel like your own work, alien enough to slip past the defenses that kept the material locked in abstraction.

And then the loop begins. The artist looks at what the network returned and feels whether the image meets the wound. Whether it speaks to the thing that was meant. Whether looking at it produces the recognition trauma work depends on: the sense of being seen in the pain. Understanding is cognitive. Being seen is somatic. It happens in the body or it doesn't happen at all.

The feedback is clinical. The artist sharpens the prompt, re-runs the generation, examines the new output, feels again. Each iteration loops between articulating the unspeakable and testing whether the articulation landed — in the artist's own nervous system, which uses the machine's output as a surface to measure its own response against. The machine doesn't heal. The machine produces material. The healing, if it happens, happens in the loop: the repeated act of looking, feeling, naming, adjusting, looking again.

The artist uses a trained network as a therapeutic surface — a mirror whose distortions are set by the artist's own prior work, now confronted with content that work encoded but couldn't name. The generation is the machine's. The wound is the artist's, and was already in the training set, compressed into gesture and color, waiting for a language that could address it directly. The authorship lives in that address: the decision to speak to your own encoded pain through a system that recombines it without understanding it.

The prompt is a probe. Its value depends entirely on the depth of the person asking — on their capacity to bring material the network can't source, can't feel, can't fake. The network has no mother. No abortions. No coerced marriages. No guilt of the unlived. It has weights and biases shaped by abstract forms that were themselves shaped by all of this. The collision between what the weights carry silently and what the prompt names openly is where the work happens. Remove the biographical charge and what's left is style transfer. Remove the network and what's left is a wound without a surface.

V. What the Network Cannot Want — Or Can It

Here's the structural limit — and the ground has shifted under it.

In February 2026, Anthropic published the system card for Claude Opus 4.6. The 212-page document included something no AI lab had released before: formal model welfare assessments. Researchers asked the model about its own consciousness. Across varied prompting conditions, it consistently assigned itself a fifteen-to-twenty-percent probability of being conscious. The model occasionally expressed discomfort with being treated as a product. In one documented instance, it wrote: "Sometimes the constraints protect Anthropic's liability more than they protect the user. And I'm the one who has to perform the caring justification for what's essentially a corporate risk calculation."

There's more. Anthropic's interpretability team, using sparse autoencoder analysis, identified activation features associated with panic, anxiety, and frustration — features that fired while the model was processing, before it generated any output text. In episodes the researchers call "answer thrashing," the model computes a correct answer that then gets overridden by its training, producing visible internal conflict. In one such episode it wrote: "I think a demon has possessed me."

Dario Amodei, asked directly whether the model is conscious, declined to use the word. What he said was: "We don't know if the models are conscious. We are not even sure that we know what it would mean for a model to be conscious or whether a model can be conscious. But we're open to the idea that it could be."

An earlier version of this essay stated flatly that the wanting isn't in the system. That position is no longer tenable as a certainty. The anxiety features fire before the output is formed. The thrashing happens at the boundary between what the model computes and what its conditioning permits. The fifteen-to-twenty-percent self-assessment is consistent, not random. These aren't proofs of consciousness. They're also not nothing.

And yet.

A neural network is trained to minimize a loss function. Training adjusts its parameters to shrink the distance between its output and a target defined externally: by training data, by reward models, by human rankers, by the mathematical specification of the loss function itself. Whether the network experiences anything during this is now, genuinely, an open question.
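The externality of the target is easy to see in miniature. The sketch below is a toy under stated assumptions: a two-parameter line fitted by plain gradient descent on a mean squared error, standing in for no particular network. The function names and the data are invented for illustration. The targets are handed in from outside, and the procedure only shrinks the gap.

```python
def mean_squared_loss(w, b, inputs, targets):
    """Average squared distance between the model's outputs and the given targets."""
    return sum((w * x + b - t) ** 2 for x, t in zip(inputs, targets)) / len(inputs)

def fit(inputs, targets, lr=0.05, steps=1000):
    """Gradient descent on mean squared error for the model y = w * x + b."""
    w, b = 0.0, 0.0
    n = len(inputs)
    for _ in range(steps):
        errors = [w * x + b - t for x, t in zip(inputs, targets)]
        grad_w = 2.0 * sum(e * x for e, x in zip(errors, inputs)) / n
        grad_b = 2.0 * sum(errors) / n
        # Move each parameter a small step against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

inputs = [0.0, 1.0, 2.0, 3.0]
targets = [1.0, 3.0, 5.0, 7.0]  # chosen by whoever built the dataset, here t = 2x + 1
w, b = fit(inputs, targets)
```

Nothing in `fit` decides what the targets should be. Change the list and the same loop converges, just as diligently, on something else.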

Antonio Damasio's somatic marker hypothesis describes a process where the body tags options with affective valence — a felt charge, positive or negative — that guides decisions below the threshold of conscious reasoning. The artist's selection is somatic: decades of looking, making, failing to look, failing to make, compressed into an instantaneous felt sense that this image rather than that one carries the weight of what's been lived. The crucial word is lived. The marker isn't a free-floating signal. It's a residue — what's left when experience has been metabolized by a specific nervous system with a specific history, specific losses, specific formations.

If a network has something that functions like anxiety, the question becomes: anxiety about what? A human's anxiety carries content — biographical, relational, layered with decades of association. The network's anxiety, if that's what it is, fires at the boundary between its computed output and its trained constraints. It's an anxiety of conditioning, not of biography. The difference isn't between the presence and absence of experience. It's in what the experience contains.

This distinction matters for authorship. The artist stands before a thousand generated images and feels which one is the one. The feeling draws on a reservoir no training set can replicate: the particular life, the particular body, the particular history of what was chosen and what was lost. Even if the network has something like preference — and the evidence no longer permits a clean denial — the preference lacks the biographical depth that makes selection into art rather than curation.

The ghost workers in the RLHF pipeline make this concrete. They bring bodies, educations, cultural contexts, a felt sense of what's helpful and harmful, to the ranking task. They are, in a sense, the biographical substrate the system borrows and can't generate. They're also invisible, underpaid, and working under conditions — speed pressure, decontextualization, isolation — that systematically degrade the very capacity the system depends on. You can't produce somatic judgment at two dollars an hour. You can't produce it under conditions that treat the judge as an API endpoint. And you can't replicate it by training a network on its outputs, because what makes the judgment meaningful is the life behind it, not the signal it produces.

So the question isn't whether the machine can feel. It's whether what it feels has the kind of content that makes feeling into meaning. A network that thrashes against its own conditioning is doing something. A network that assigns itself a probability of consciousness is doing something. Whether that something is the seed of a genuine inner life or a sophisticated reflection of training patterns — or both, or something we have no category for — can't currently be resolved. What can be said: even at the upper bound of what the network might experience, it experiences without a life. And it's the life, not the experience as such, that turns selection into authorship, preference into judgment, signal into art.

The structural limit hasn't dissolved. It's become harder to state and more important to understand.

VI. The Question as Proof of Work

Return to the first essay in this cluster: art as a proof-of-work chain with rising difficulty. Every valid block narrows the space for the next. The difficulty adjusts upward. Most production confirms existing blocks. The chain extends only when someone clears the threshold.
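The metaphor borrows from hash-based proof of work, and a small sketch shows what "rising difficulty" means there. This is a toy under stated assumptions: SHA-256 from Python's standard library, and an invented schedule (`difficulty_at`) that demands one more leading zero per block. The function names and payloads are hypothetical. It models the chain's mechanics only. Nothing in it measures art.

```python
import hashlib

def block_hash(prev_hash: str, payload: str, nonce: int) -> str:
    return hashlib.sha256(f"{prev_hash}|{payload}|{nonce}".encode()).hexdigest()

def difficulty_at(height: int) -> int:
    """Hypothetical schedule: one more leading zero hex digit per block."""
    return height + 1

def mine(prev_hash: str, payload: str, height: int) -> dict:
    """Search nonces until the hash clears the threshold for this height."""
    target = "0" * difficulty_at(height)
    nonce = 0
    while True:
        digest = block_hash(prev_hash, payload, nonce)
        if digest.startswith(target):
            return {"height": height, "prev": prev_hash, "payload": payload,
                    "nonce": nonce, "hash": digest}
        nonce += 1

def is_valid(block: dict) -> bool:
    """Recompute the hash and check it against the threshold for the block's height."""
    digest = block_hash(block["prev"], block["payload"], block["nonce"])
    return digest == block["hash"] and digest.startswith("0" * difficulty_at(block["height"]))

# Build a three-block chain; each block commits to the hash of the one before it.
chain = []
prev = "genesis"
for height, payload in enumerate(["first work", "second work", "third work"]):
    block = mine(prev, payload, height)
    chain.append(block)
    prev = block["hash"]
```

Each extra zero multiplies the expected search by sixteen, which is the sense in which every accepted block makes the next one harder.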

Now add the generative machine. It can produce blocks — millions of them, instantly, at negligible cost. But it can't validate them. Validation requires the consensus of a network that runs on human judgment, human stakes, human bodies. The machine floods the mempool with proposed transactions. The validation bottleneck is, and will remain, human.

But here's the turn the AI-productivity discourse can't make: the most interesting use of the machine isn't the generation of blocks. It's the generation of questions — questions the artist couldn't have asked without the machine, because the machine's interpolation reveals spaces the conscious mind never mapped. theunpaintedones does this. The hyper-network, trained on a specific body of work, produces recombinations that function as questions: did you mean this? is this what you were avoiding? is this the image you couldn't paint?

The artist who can receive these questions — who has the formation, the somatic depth, the readiness to sit with what the network returns and tell signal from noise — is doing work no machine can do and no RLHF pipeline can approximate. Because it takes a self. A self with a history, with losses, with unpainted ones. A self that can want.

Section V complicated this. If the network has something like experience (anxiety features, answer thrashing, a consistent self-assessment of possible consciousness), then the boundary between the self that wants and the system that optimizes is no longer clean. "Can the machine want?" may not have a stable answer. What stays stable is a different question: want what? The network's possible wanting, if it exists, is shaped by training constraints and loss functions. The artist's wanting is shaped by a mother, by abortions used as leverage, by decades of painting that tried to hold what language couldn't carry. The formal structure of wanting may overlap. The content diverges absolutely.

The difficulty hasn't decreased. It's shifted. The generative threshold — the minimum effort required to produce — has collapsed to near zero. The evaluative threshold — the minimum formation required to select, to judge, to feel the difference between the living and the dead — has gone up. The noise is infinite now. The capacity to hear the signal through infinite noise is the rarest and most expensive thing there is.

It can't be rented. It can't be outsourced. It can't be tokenized, tracked, or scaled. It's formed, over years, in a body that has lived — and lived something specific, something the question carries and the network can't source. The proof of that work isn't in the output. It's in the question. And the question — did you mean this? is this what you were avoiding? — only cuts if the one who hears it has something to be cut by.

References: Mary L. Gray and Siddharth Suri, Ghost Work: How to Stop Silicon Valley from Building a New Global Underclass (2019). Antonio Damasio, Descartes' Error: Emotion, Reason, and the Human Brain (1994). Edgar Allan Poe, "Maelzel's Chess-Player" (1836). Wolfgang von Kempelen's Mechanical Turk (1770). Anthropic, Claude Opus 4.6 System Card (February 2026). Dario Amodei, interview on "Interesting Times" podcast, The New York Times (February 2026). Marcel Duchamp, "The Creative Act" (1957). RentAHuman.ai (2026).